Microsegmentation

This guide explains the concept of microsegmentation and shows you how to use Calico network policy to isolate and protect containerized applications.

You can get started with Calico by following our quickstart guide. You'll learn how to install Calico, secure a cluster with network policy, and monitor network traffic with Calico Whisker.

Overview

What is microsegmentation?

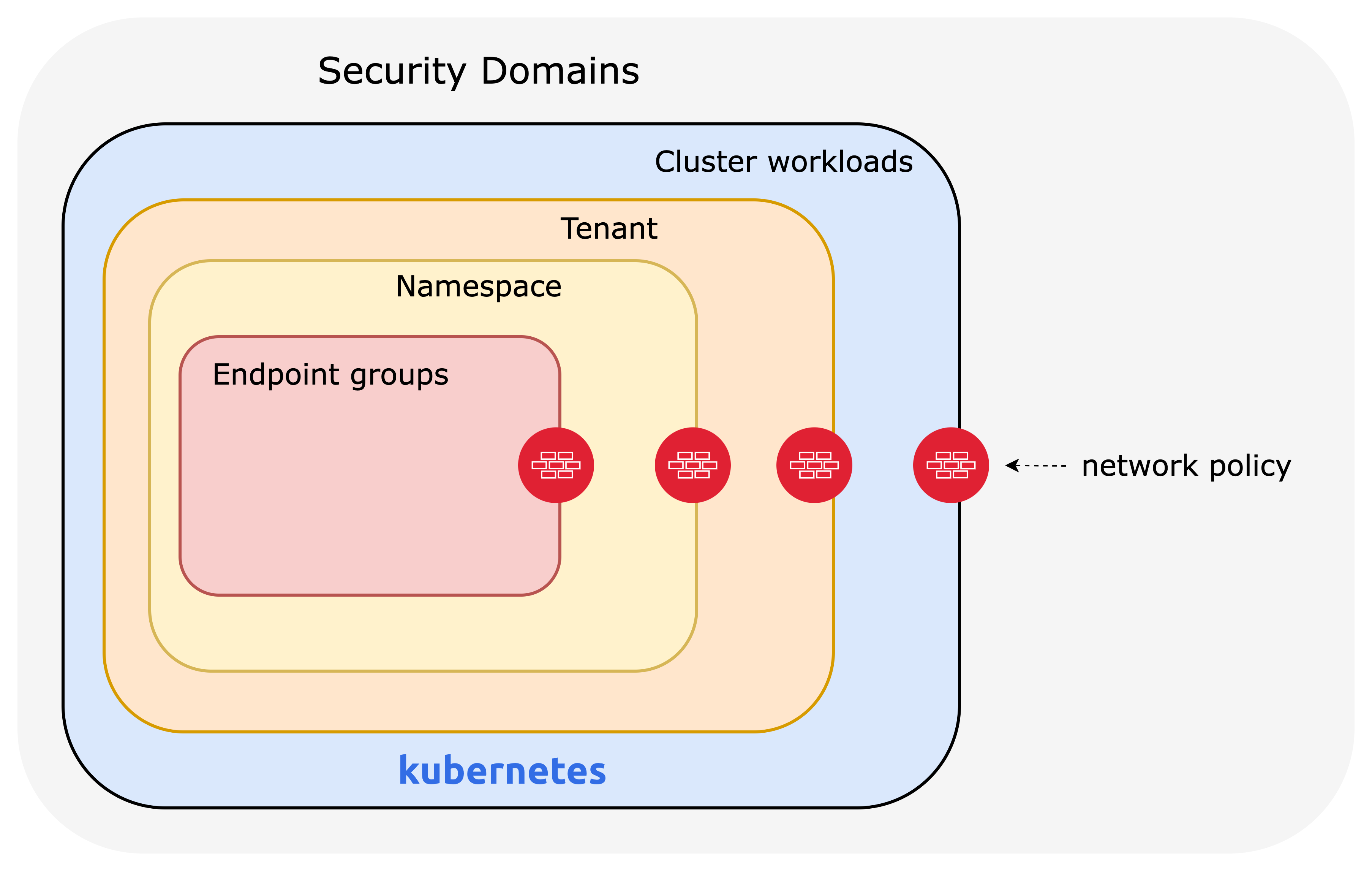

Microsegmentation extends traditional segmentation to containerized environments, providing granular isolation for enhanced security in Kubernetes. Unlike legacy methods that focus on perimeter security, microsegmentation leverages network policies to secure assets at a finer level, from workloads to namespaces, based on label selectors. By limiting communication between segments, it isolates critical security domains, stops lateral movement, and ensures compliance.

Why use Calico for microsegmentation?

Anyone who has a need to segment containerized systems in their organization can use Calico's network policies to isolate, protect, and secure multiple security domains, such as Kubernetes workloads, namespaces, tenants, and hosts. Traditional firewalls are not designed with dynamic, containerized environments in mind. Instead, microsegmentation is implemented using purpose-built network policies that allow for different levels of granularity to secure workloads, applications, namespaces, or clusters.

Calico has a range of functionality that makes implementing microsegmentation in Kubernetes easier and faster.

In open-source Calico (Project Calico) and commercial Calico products, you take advantage of Calico network policy, which extends the functionality of Kubernetes network policy. Key features include the ability to protect more types of endpoints, write global policies that apply cluster-wide, and use additional policy actions such as deny and log.

Commercial Calico products (Calico Enterprise and Calico Cloud) complement the additional network policy capabilities with an easy-to-use browser-based UI that includes observability, enhanced policy management, policy recommendations, and more.

More detailed descriptions of functionality and how to implement microsegmentation with Calico can be found further on in this document.

Microsegmentation at different levels

When organizations need to segment and isolate their Kubernetes environments, this is typically done at three different levels: Workload Isolation, Namespace Isolation and Tenant Isolation.

Workload isolation

Kubernetes network policy is defined to secure specific microservices within a tenant or namespace. Isolating individual workloads helps further reduce the attack surface and prevents lateral movement between workloads or potential performance issues. A network policy designed for workload isolation contains more restrictive ingress and egress rules, only allowing essential communication between microservices. For example, an application may contain frontend and backend workloads within the same namespace. If the frontend is public, and more likely to be breached, apply a microsegmentation strategy where communication between the frontend and backend is restricted. If the frontend is compromised, the likelihood of the malicious actor obtaining sensitive information from the backend is significantly reduced. Perimeter (namespace) security approaches are inadequate in this situation.
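The frontend and backend scenario above could be expressed roughly as follows. This is a minimal sketch, not a definitive policy: the namespace `storefront`, the `app` label values, and port 8080 are all assumptions made for illustration.

```yaml
# Hypothetical example: only pods labeled app=frontend may reach
# pods labeled app=backend, and only on TCP 8080.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: backend-allow-frontend   # illustrative name
  namespace: storefront          # assumed namespace
spec:
  podSelector:
    matchLabels:
      app: backend               # the workload being protected
  policyTypes:
    - Ingress
  ingress:
    - from:
        - podSelector:
            matchLabels:
              app: frontend      # the only permitted client
      ports:
        - protocol: TCP
          port: 8080             # assumed backend port
```

Because the policy selects the backend pods for ingress, any ingress traffic to them that is not listed here is dropped, which is what limits the impact of a compromised frontend.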

Namespace isolation

In Kubernetes, namespaces are used to separate different software applications or processes from each other by assigning them unique namespaces. This helps to prevent conflicts or interference between different applications that may be running on the same system, and owned by different users. Isolating and securing applications in their namespaces means developers can ensure that their software operates independently and securely without the ability to impact other namespaces in the cluster. If one application in a namespace is compromised, having secured and isolated namespaces reduces and contains the “blast radius” of that threat.
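One common way to sketch namespace isolation with a standard Kubernetes network policy is to allow ingress only from pods in the same namespace. The namespace name `team-a` is an assumption for illustration.

```yaml
# Hypothetical example: pods in team-a accept ingress only from
# other pods in team-a.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-same-namespace-only   # illustrative name
  namespace: team-a                 # assumed namespace
spec:
  podSelector: {}          # applies to every pod in the namespace
  policyTypes:
    - Ingress
  ingress:
    - from:
        - podSelector: {}  # any pod in this same namespace
```

This sketch restricts ingress only. Egress from the namespace stays open unless a separate egress policy is added.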

Tenant isolation

When cluster infrastructure is shared between tenants, there may be an organizational or compliance requirement for isolation. A tenant may contain several resources, including workloads or namespaces (secured or unsecured), and still require that perimeter security apply to the context of the tenant. This protects each tenant from lateral movement at an infrastructure level where a malicious or opportunistic actor may seek to obtain or steal high value assets, damage or misuse applications. If one tenant is compromised, the risk to other tenants on the same infrastructure is significantly reduced.
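A tenant perimeter can be approximated with a single Calico global network policy, assuming every namespace that belongs to the tenant carries a shared label. The `tenant` label key, its value, and the `order` value below are hypothetical.

```yaml
# Hypothetical example: workloads in tenant-a namespaces accept ingress
# only from workloads in namespaces that carry the same tenant label.
apiVersion: projectcalico.org/v3
kind: GlobalNetworkPolicy
metadata:
  name: tenant-a-isolation    # illustrative name
spec:
  order: 500                  # assumed; pick a value that fits your policy model
  namespaceSelector: 'tenant == "tenant-a"'   # assumed namespace label
  types:
    - Ingress
  ingress:
    - action: Allow
      source:
        namespaceSelector: 'tenant == "tenant-a"'
```

Finer-grained namespace or workload policies can then be layered inside the tenant without changing this perimeter rule.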

Foundational concepts for microsegmentation

Before implementing a segmentation strategy in a cluster there are a few key high-level concepts that must be understood. These are:

- Security domains

- Network security

- Default deny

If you are a Calico Enterprise or Calico Cloud user there are more features available that enable a faster and easier microsegmentation experience.

Security domains

Anyone implementing microsegmentation and zero-trust should first identify all the security domains that need to be secured. A security domain could be any of the following:

Clusters: The cluster can be considered a security domain.

Tenants: Each tenant can be considered a separate security domain. A tenant may be comprised of a team or individuals that share the same Kubernetes infrastructure. It is a collection of applications or namespaces for which a specific security declaration is enforced.

Namespaces: Microservices that make up an application are typically organized into a single namespace and can be treated as separate security domains. For example, you can consider protecting an application from other applications in the cluster or the same tenant.

Endpoints: A microservice comprises endpoint groups such as Deployment, StatefulSet, and DaemonSet resources. Microservices, even in the same namespace, must be protected from each other to limit exposure if one is compromised. For example, consider a frontend and a backend microservice in the same namespace. They are in separate security domains and must be protected by network policies.

Calico's enhanced network policy capabilities

Successful segmentation is achieved by implementing network policies that secure security domains at the correct granularity depending on an organization's security posture or compliance requirements.

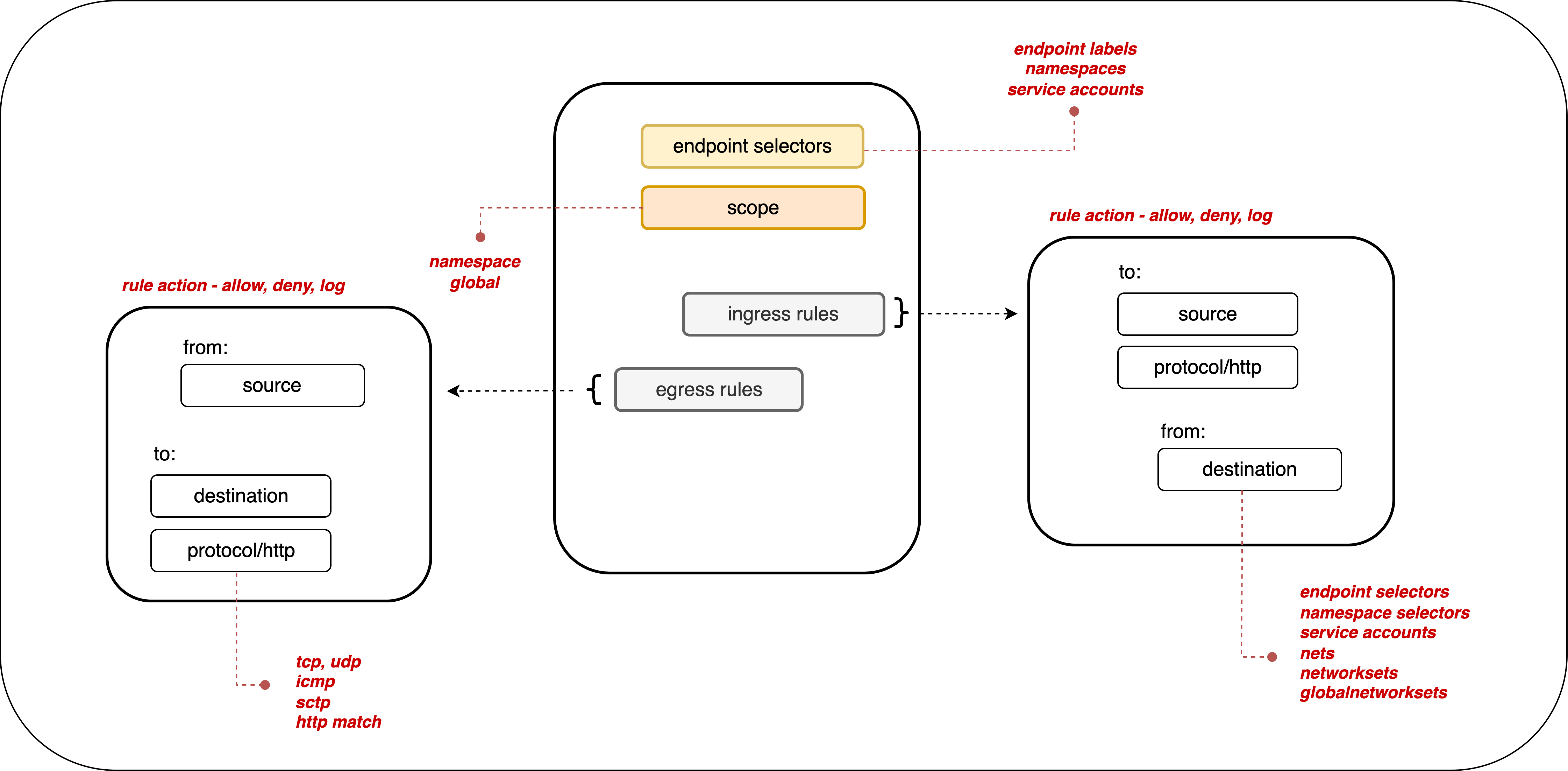

Calico’s network policies provide a richer set of policy capabilities than standard Kubernetes network policies. Calico's network policies include:

- policy ordering/priority

- deny rules

- more flexible match rules

- applicable to multiple endpoints (pods, VMs, and host interfaces).

There is a more detailed list of Calico network policy features available here. The Kubernetes documentation outlines what network policies do and do not support.

Project Calico integration with Istio provides application security and service mesh capabilities. This integration enables layers 5–7 network policy match criteria, end-to-end mTLS encryption, and cryptographic identity. For more information, read this blog to learn how to integrate Kubernetes RBAC and Calico to achieve shift-left security.

Calico network policies are:

- Declarative - define security intentions in YAML files (or by creating a policy in the Calico Cloud or Calico Enterprise web console)

- Label-based - network policies apply to endpoints based on workload identity using label selectors. These can be combined into larger expressions using multiple operators and parentheses.

- Dynamic - network policies are tightly coupled with workloads based on their identity, not ever-changing IP addresses
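To illustrate how label selectors can be combined with operators and parentheses, here is a minimal sketch of a Calico network policy. All label keys and values (`app`, `environment`, `pci`, `role`, `quarantine`) and the namespace are hypothetical.

```yaml
# Hypothetical example of compound selector expressions.
apiVersion: projectcalico.org/v3
kind: NetworkPolicy
metadata:
  name: selector-expression-example   # illustrative name
  namespace: storefront               # assumed namespace
spec:
  # Applies to checkout pods that are in staging or prod,
  # or that carry a pci label with any value.
  selector: 'app == "checkout" && (environment in {"staging", "prod"} || has(pci))'
  types:
    - Ingress
  ingress:
    - action: Allow
      source:
        # Clients must be frontends that are not marked as quarantined.
        selector: 'role == "frontend" && !has(quarantine)'
```

Because the selectors match on labels rather than addresses, the policy keeps applying as pods are rescheduled and their IP addresses change.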

All Calico products support label-based policies that can be applied to either a namespace or cluster-wide (global) scope.

By default, if no policies exist in a namespace, then all ingress and egress traffic is allowed to and from pods in that namespace.

Default deny

By default, Kubernetes allows all traffic to and from a pod unless a network policy applies to it. Therefore, we recommend creating a default deny policy for your Kubernetes pods. This guarantees that if no other policy explicitly allows traffic to or from a pod, the traffic is denied.

Note that an implicit default deny policy always occurs last. If any other policy allows the traffic, then the deny does not come into effect. The deny is executed only after all other policies are evaluated.

A global default deny policy avoids needing to define a policy every time a namespace is created. It also forces tenants of the cluster to define a network policy for every new pod. However, ensure you have the correct allow policies in place to ensure control plane traffic does not get blocked.
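For comparison with the global approach, a namespace-scoped default deny written as a standard Kubernetes network policy looks roughly like this. The namespace `team-a` is an assumption, and an equivalent policy would be needed in every namespace.

```yaml
# Hypothetical example: deny all ingress and egress for every pod in
# one namespace unless another policy explicitly allows it.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: default-deny      # illustrative name
  namespace: team-a       # assumed namespace; repeat per namespace
spec:
  podSelector: {}         # selects all pods in the namespace
  policyTypes:
    - Ingress
    - Egress
```

Note that this sketch also blocks DNS lookups from the selected pods, so a matching allow policy for DNS is normally applied alongside it.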

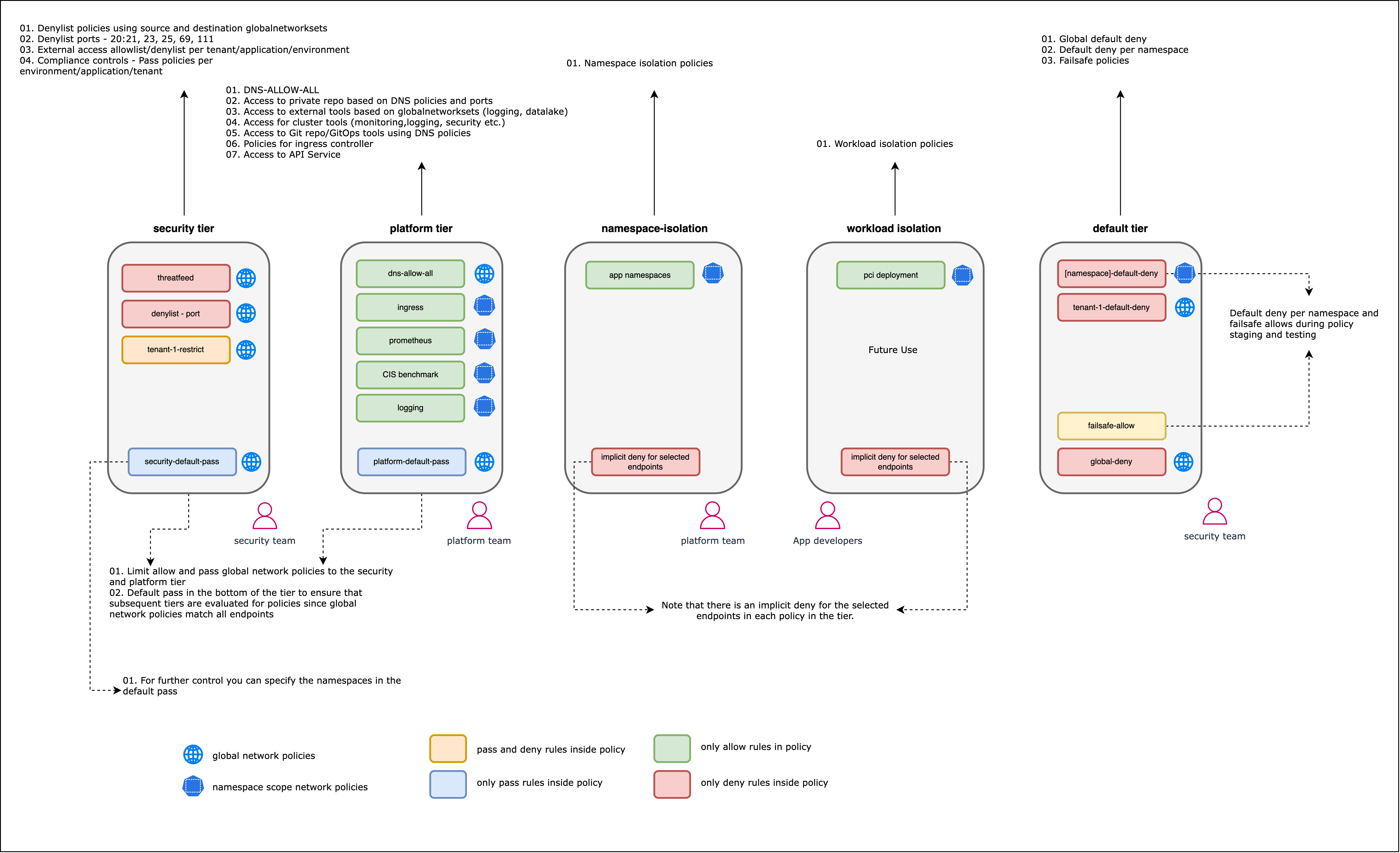

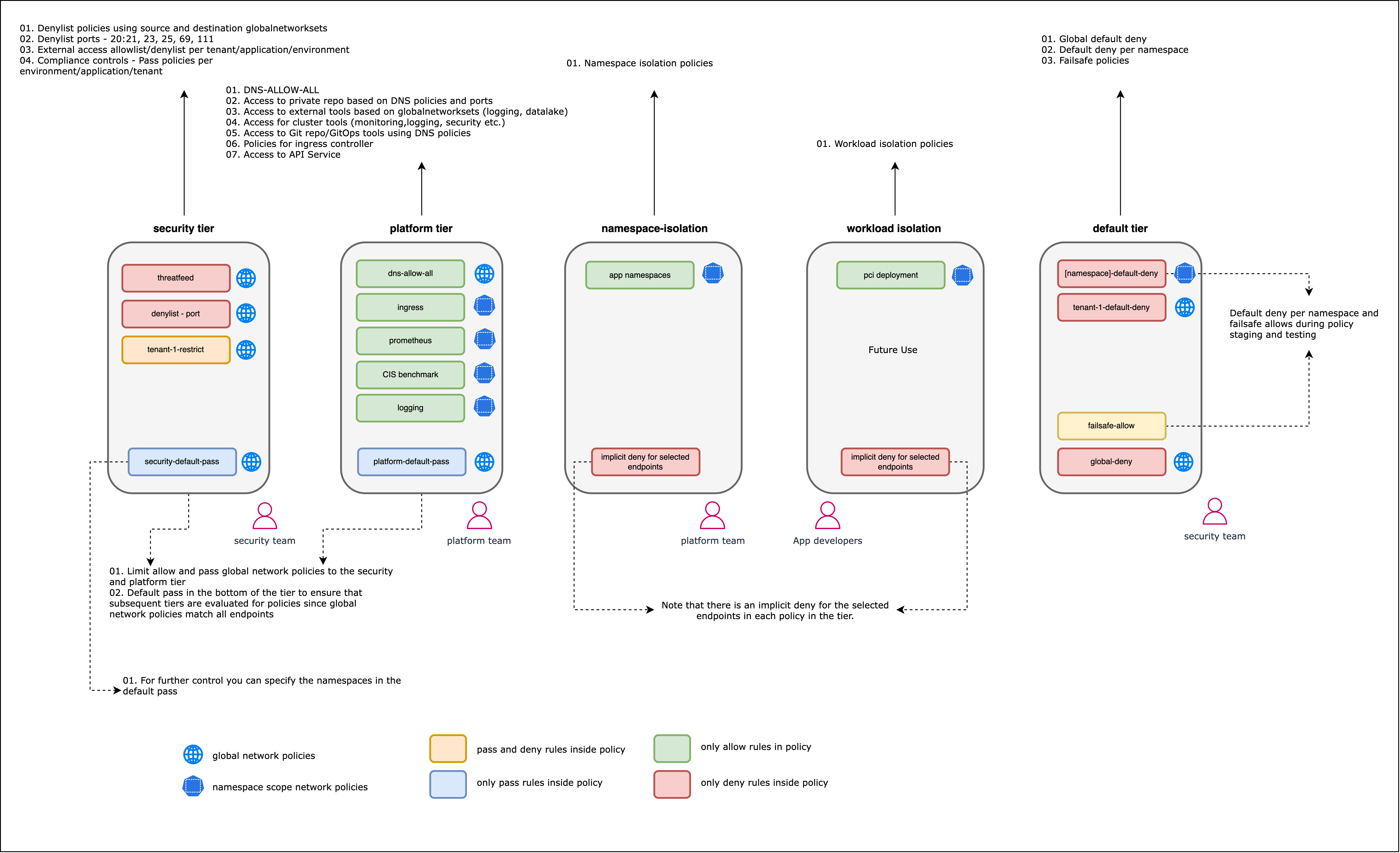

Additional functionality with Calico Enterprise and Calico Cloud

Calico Enterprise and Calico Cloud extend network policy capabilities and usability to support hierarchical tiered network policy, automatic network policy recommendations (including automatic namespace isolation) based on existing flows, and integrations with third party firewalls. Network policies can be viewed and managed through a user interface, with the ability to stage and preview policy impact before enforcement.

To achieve something similar to tiers, Project Calico users may want to read this blog.

This enables users to manage policies at scale, leading to more efficient management and implementation of a microsegmentation strategy.

The diagram below shows an example of how policies for different security domains are grouped and organized into tiers with Calico Cloud or Enterprise.

Global network policies

Calico supports a global network policy resource (kind: GlobalNetworkPolicy): a non-namespaced resource containing rules that are applied to any endpoints (pods, VMs, host interfaces) that match a selector.

By contrast, a Calico network policy (kind: NetworkPolicy) is a namespaced resource that applies only to workload endpoints (pods, containers, VMs) within that namespace.

Global policies reduce the number of policies that need to be written and managed by targeting assets that span a cluster or multiple namespaces. For example, you can create one global default deny policy, or one policy that allows communication to system services such as kube-dns. The alternative would be using Kubernetes network policies and duplicating them in each namespace within the cluster.

Network policy management

Network policy can quickly become complex and hard to manage. There are a number of tools that make it easier to understand what your policies are doing and help you make adjustments.

Policy ordering

In Calico, you can use the order field (with precedence from the lowest value to highest) to control how policies are applied and evaluated.

Defining policy order is important when you include both action: allow and action: deny rules that may apply to the same endpoint.

If no network policies apply to a pod, then all traffic to that pod is allowed.

If one or more network policies apply to a pod containing ingress rules, then only the ingress traffic specifically allowed by those policies is allowed.

If one or more network policies apply to a pod containing egress rules, then only the egress traffic specifically allowed by those policies is allowed.
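The effect of the order field when allow and deny rules can apply to the same endpoint can be sketched with two Calico policies. The label keys, namespace, port, and order values are hypothetical.

```yaml
# Hypothetical example: the policy with the lower order is evaluated first,
# so a backend pod labeled quarantine=true is denied even though the
# second policy would otherwise allow frontend traffic to it.
apiVersion: projectcalico.org/v3
kind: GlobalNetworkPolicy
metadata:
  name: deny-quarantined            # illustrative name
spec:
  order: 100                        # evaluated before order 200
  selector: 'quarantine == "true"'  # assumed label
  types:
    - Ingress
    - Egress
  ingress:
    - action: Deny
  egress:
    - action: Deny
---
apiVersion: projectcalico.org/v3
kind: NetworkPolicy
metadata:
  name: allow-frontend-to-backend   # illustrative name
  namespace: storefront             # assumed namespace
spec:
  order: 200                        # evaluated after order 100
  selector: 'app == "backend"'
  types:
    - Ingress
  ingress:
    - action: Allow
      protocol: TCP
      source:
        selector: 'app == "frontend"'
      destination:
        ports:
          - 8080                    # assumed backend port
```

If the order values were swapped, the allow rule would match first and frontend traffic would reach a quarantined backend pod, which is why the order needs to be chosen deliberately.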

Policy tiers

Calico Cloud and Calico Enterprise allow hierarchical grouping of policies into tiers. Policy tiers allow enforcement of higher-precedence policies that cannot be circumvented by other teams. Policy tiers are evaluated based on order, as are policies within each tier. Graphically, policies are evaluated from left to right, top to bottom. RBAC for each tier can be defined to restrict who can interact with each tier.

While Calico Open Source does not support policy tiers, you can use RBAC to control how different users can shape cluster security.

Staged policies

Staged policies are used to preview network behavior without enforcing it. Both network policies and global network policies can be staged.

Staged policies let you test the traffic impact of the policy as if it were enforced, but without changing traffic flow. You can also preview the impacts of a staged policy on existing traffic. By verifying that correct flows are allowed and denied before enforcement, you can minimize misconfiguration and potential network disruption.

Implementing microsegmentation with network policies

To implement microsegmentation, you should follow a structured and repeatable approach to increase the likelihood of success. This approach can be summarized as four broad steps:

- Identify the security domains for which microsegmentation will be enforced, who will be responsible for them, and who or which services need access to those security domains.

- Define a policy model using documented microservice communication for your applications or by analyzing traffic flows. When defining policies you should also consider the scope of the policies (global or namespace), who will be writing and applying the policies, and policy order (or tiers).

- Author and deploy network policies. Once all the correct allow policies are in place, stage a default deny policy. You may want to identify a low-impact application or security domain first to understand and evaluate the process before prioritizing segmentation of critical security domains.

- Re-assess any flows or new applications that may require policy remediation before enforcing a default-deny. In Calico Open Source, where staged policies are not supported, enforce a default deny in a staging environment to correct any policies prior to enforcing in production.

Identify your security domains

When identifying security domains or applications to secure, you may also want to consider compliance requirements and how critical your applications are. These considerations will be unique to your organizational requirements, security posture, and risk tolerance. For more information on meeting compliance requirements beyond microsegmentation, review one of our white papers.

An organization should know whether it has critical applications that require a strong security posture, where the risk of having an application compromised justifies the effort to protect it. Such applications require a more fine-grained security approach and might need policies in place for multiple security domains: cluster, tenants, namespaces, and workloads. In a low-risk environment that doesn't contain critical or sensitive data, you may determine that namespace or tenant security domain isolation is sufficient.

Starting with a low-risk implementation in a staging environment allows you to de-risk the process before applying it to business-critical security domains.

Things you should consider:

- Compliance requirements

- Vulnerability or susceptibility to attack

- Organizational security posture (zero-trust will require more stringent segmentation)

- Importance of applications or security domains

Develop a policy framework

Once the security domains are identified, you should start designing and developing your network policies. Work from the highest-level policies (broadest scope) to the lowest level, more fine-grained policies. In Calico Enterprise or Calico Cloud, it will be easier to think in tiers, and design policies tier-by-tier, from left to right across the policy board. The left-hand side of the board contains tiers and policies with higher precedence that apply to the cluster or platform, or a broad subset of assets within them. As you move right across the policy board, tiers should focus on specific namespaces or applications and the network policies will reflect that.

Refer to this diagram as a skeleton framework for developing a policy framework:

Before you can write the network policies, you will need to know how to target specific assets (labels, service accounts or namespaces) and what rules to write (based on cluster traffic):

Analyze cluster traffic

To define a network policy model, you need to know what the expected communication is within a security domain and design policies to protect those domains. You may need to consider:

- Will you use global or namespaced policies?

- Do you need to protect hosts (and configure that)?

- Who is responsible for defining policies for different security domains?

- What order should policies be applied in?

- Policy tiers, for Calico Cloud or Calico Enterprise users.

Policy models may be easier to produce if an application has documentation stating how microservices communicate over which protocols and ports. A ‘shift left’ approach also puts the onus on individuals or developers who are more familiar with communication dependencies and can define accurate policy.

If you’re using Calico Open Source and struggling to analyze traffic flows, you may find it easier to install a text scraping tool (such as Fluent Bit) and pass log information to a text processing application (such as Elastic). This lets you see layer 2-3 logs and build policies based on that information.

Calico Cloud and Calico Enterprise users have multiple tools available to analyze flows:

- Dynamic Service and Threat Graph is a topographical visualization of workload communication. Through this feature, you can access flow logs that show metadata for source and destination endpoints, as well as any policies that apply to the flow and the outcome.

- Flow Visualization (FlowViz) shows volumetric flow data within the cluster in a 360° view. In addition to Service Graph, FlowViz allows users to see what flows exist within a cluster or namespace, the metadata and selectors associated with endpoints, and any applied policies.

- Elasticsearch and Kibana are included with Calico Cloud and Calico Enterprise, enabling users to explore Elasticsearch logs and gain insights into workload communication traffic volume, performance, and other key aspects of cluster operations. Log data is also summarized in custom dashboards.

Label your assets

As network policies are identity-aware, it is likely assets within a cluster will be targeted using labels. Additionally, Calico global network policies can target service accounts or namespaces. As such, before authoring any policies, ensure all assets are appropriately labeled so that policy selectors can target the correct assets at the right level of segmentation.

In an example applying microsegmentation to a demo storefront, every pod within a hipstershop namespace is labeled with a compliance label in addition to an 'app' label denoting what service the pod is. This allows for multiple policies. One network policy could be designed, allowing communication between all pods with a generalized label. Other network policies could be created for each workload, using the 'app' label to target specific workloads and create fine-grained ingress and egress rules. This allows flexibility in the approach to microsegmentation depending on which security domains need protecting.
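The labeling scheme described above might look like the following sketch. The compliance label key and value, the pod name, and the container image are hypothetical placeholders. Only the hipstershop namespace and the 'app' label come from the example itself.

```yaml
# Hypothetical pod carrying both a general compliance label and an app label.
apiVersion: v1
kind: Pod
metadata:
  name: cartservice
  namespace: hipstershop
  labels:
    app: cartservice      # identifies the specific service
    compliance: pci       # assumed key/value for the general compliance label
spec:
  containers:
    - name: server
      image: example.com/hipstershop/cartservice:1.0   # placeholder image
---
# Broad policy: allow traffic between all pods that share the compliance label.
apiVersion: projectcalico.org/v3
kind: NetworkPolicy
metadata:
  name: allow-within-compliance-group   # illustrative name
  namespace: hipstershop
spec:
  selector: 'compliance == "pci"'
  types:
    - Ingress
  ingress:
    - action: Allow
      source:
        selector: 'compliance == "pci"'
```

A narrower policy for a single workload would use a selector such as `app == "cartservice"` instead and list only the ingress and egress that service needs.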

When designing and applying labels, you should:

- Integrate label design into the identification step, creating a hierarchical design that aligns with security domains.

- Use label prefixes for classification to create a standardized approach depending on the security domain. Consider prefixing labels with the intended security domain, and designing appropriate key/value pairs that accurately represent the tenant, application, namespace, or endpoint.

- Don't use reserved label keys, such as kubernetes, tigera, or calico.

- If using a CI/CD pipeline, ensure the right labels are being applied and set up label governance checks. This will ensure new deployments are appropriately secured from the beginning.

More information is available on labeling best practices.

Write your policies

The previous steps should set you up for success when creating policies because you will know exactly what policies need to be created, with what order and tier, and what labels to use as selectors.

Before you write any policies, you may want to review the best practices for network policies in: Calico Open Source, Calico Enterprise, Calico Cloud.

To make policy writing easier and faster, Calico Enterprise and Calico Cloud have several features you may want to consider:

Graphical Policy Editor: Calico Cloud and Calico Enterprise have a ‘Create Policy’ GUI to create, edit, and delete policies. Policies that have been created in the GUI can be downloaded as YAML.

DNS Policies: If you need to limit traffic to/from external non-Calico workloads or networks, you can use external IPs or network rules.

Automatic policy recommendations: Calico Cloud and Calico Enterprise also support automatic policy recommendations that generate network policy to isolate namespaces. Policy recommendations can also be generated for specific namespaces or workloads for a more granular segmentation strategy. Policy recommendations make it easier and faster for platform operators to implement namespace isolation at scale, even without experience in authoring network policies or detailed knowledge of how application workloads are communicating. Calico analyzes the flow logs that are generated from workloads, and automatically recommends and stages policies for each namespace that can be used for isolation. All recommended policies are staged by default. This allows you to preview the impact of a policy without impacting traffic flow, to assess the effectiveness of a policy, or to evaluate any unintended side effects. Policy recommendations need to be enabled per cluster and may take time to learn and analyze flows before providing recommendations.

All versions of Calico support both Kubernetes network policy and Calico network policy.

Deploy network policies

In all products, policies can be applied using the CLI. Policies created in the web console for Calico Enterprise or Calico Cloud can be staged or enforced through the UI. You can check whether policies have been applied by running the following commands:

    kubectl get networkpolicy
    kubectl describe networkpolicy <networkpolicy-name>

or

    kubectl get globalnetworkpolicy
    kubectl describe globalnetworkpolicy <globalnetworkpolicy-name>

When applying network policies, apply them in an order that does not disrupt applications. Calico network policy supports policy ordering when traffic is being evaluated. Calico Enterprise and Calico Cloud also support hierarchical policy tiers.

With Calico Open Source you may want to define the policy order in the policy spec in addition to applying policies in the correct order.

Calico Enterprise and Calico Cloud feature hierarchical tiers that are visible on the policies board. Any applied policies, both staged and enforced, are visible on the policies board in the correct order.

It is important to understand how policy endpoints match across tiers.

- Apply policies by any method. Start with general policies that target broad security domains.

- Verify policies are working as intended before you apply narrower policies, such as those at the application or workload level.

- Ensure policies allow communication to critical services and control plane services (such as kube-dns).

- Confirm that policies exist in the correct order by using CLI tools or the web console's policy board.

- Enforce any staged policies.

Enforce a default deny policy

The default behavior in Kubernetes is to allow all communication that isn't restricted by a network policy. A default deny policy ensures that unwanted traffic (ingress and egress) is denied by default. You must create policies to allow traffic to your pods and services. Pods without a policy (or that have an incorrect policy) are blocked from all network traffic until an appropriate network policy is defined.

Note that Calico global network policies are not namespaced. They affect all pods that match the policy selector. In contrast, Kubernetes network policies are namespaced. To achieve the same effect with them, you would need to create a default deny policy for every namespace in your cluster.

This also comes with the warning that you need to ensure the default deny does not target system pods that are critical to cluster integrity. The following policy correctly excludes system pods from the global deny rule:

    apiVersion: projectcalico.org/v3
    kind: GlobalNetworkPolicy
    metadata:
      name: deny-app-policy
    spec:
      namespaceSelector: has(projectcalico.org/name) && projectcalico.org/name not in {"kube-system", "calico-system", "tigera-system"}
      types:
      - Ingress
      - Egress
      egress:
      # allow all namespaces to communicate to DNS pods
      - action: Allow
        protocol: UDP
        destination:
          selector: 'k8s-app == "kube-dns"'
          ports:
          - 53
      - action: Allow
        protocol: TCP
        destination:
          selector: 'k8s-app == "kube-dns"'
          ports:
          - 53

Calico Enterprise and Calico Cloud have a 'hidden' tier that contains policies to secure Calico components that have precedence over any user-created policies or tiers. To ensure your cluster continues to work correctly, don't modify or circumvent these policies.

Calico Open Source: Enable default deny for Kubernetes pods

In Calico Cloud and Calico Enterprise, the staging policy tool will help you find incorrect and missing policies. A global deny rule helps mitigate other lateral malicious attacks.

We recommend that you create a global default deny policy after you complete writing policy for the traffic that you want to allow. Use the staged policy feature to get your allowed traffic working as expected, and then lock down the cluster to block unwanted traffic. The following steps summarize best practice:

- Create a staged global default deny policy. It will show all the traffic that would be blocked if it were converted into an enforced deny policy.

- Create other network policies to individually allow the traffic shown as blocked, until no connections are denied.

- Convert the staged global network policy to an enforced policy.
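The first step above could be sketched as follows for Calico Enterprise or Calico Cloud. This reuses the system-namespace exclusion from the earlier default deny example. The policy name is hypothetical, and depending on your version you may also need to set a tier or follow a tier-based naming convention, which this sketch leaves out.

```yaml
# Hypothetical example: a staged global default deny. It reports which
# flows would be denied but does not block any traffic.
apiVersion: projectcalico.org/v3
kind: StagedGlobalNetworkPolicy
metadata:
  name: staged-default-deny   # illustrative name
spec:
  # Exclude system namespaces, as in the enforced example.
  namespaceSelector: 'has(projectcalico.org/name) && projectcalico.org/name not in {"kube-system", "calico-system", "tigera-system"}'
  types:
    - Ingress
    - Egress
```

Once no wanted flows show up as denied by the staged policy, the same spec can be applied with kind: GlobalNetworkPolicy to enforce it.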

Steps to an enforced default-deny posture:

- Create your allow policies. You can't predict everything, but try to get as much of this in place as you can.

- Create a default deny policy.

  - If globally scoped, create one policy.

  - For namespace-scoped default deny policies, create one policy per namespace.

- In Calico Enterprise or Calico Cloud, stage the default deny policy either through the UI or by setting kind: StagedGlobalNetworkPolicy or kind: StagedNetworkPolicy.

- In Calico Open Source, you should apply the default deny policy in a non-production environment first. You may optionally include a 'log' action and have flows that are evaluated by the default deny recorded in the syslog.

- Simulate your application or services. Validate all policies are working as intended, and there are no issues with the application or service.

- Once everything is validated, the default deny policy can be enforced or applied in a production environment.

Monitor and fine-tune your policies

After you have policies in place, you can actively monitor traffic and performance to make sure your policies are behaving as you expect.

There are a few ways to monitor and review whether your policies are effective.

Review the policies board

In Calico Cloud and Calico Enterprise, the policies board is the first place to look for misconfigurations. From here, you can spot policies that are denying traffic or that have no endpoints assigned to them.

Use observability tools

Calico Enterprise and Calico Cloud have tools that help you visually identify misconfigured policies. These include Service Graph, FlowViz, and the policy board. Commercial Calico products also include Kibana as a frontend for Elasticsearch. This provides richer functionality for dashboards and logs. For an in-depth demonstration, see this video series.

Test connectivity with test pods

One way to test connectivity is to deploy test pods in different security domains and see whether they can connect to one another. You can label these pods to mimic other services and trigger specific policies. When the pods are deployed, you can use tools such as curl or netcat to check that connectivity is working as expected.

The response can be used to determine if a network policy is correctly denying or allowing traffic to pass through, and this can be used in conjunction with flow logs or observability.
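A test pod of this kind might be defined as in the sketch below. The pod name, namespace, and label are hypothetical, and the image is assumed to be the publicly available curlimages/curl image.

```yaml
# Hypothetical test client labeled to mimic a frontend workload so that
# policies selecting app=frontend apply to it.
apiVersion: v1
kind: Pod
metadata:
  name: policy-test-client    # illustrative name
  namespace: storefront       # assumed namespace
  labels:
    app: frontend             # mimic the service whose access you want to test
spec:
  restartPolicy: Never
  containers:
    - name: curl
      image: curlimages/curl        # assumed image that provides curl
      command: ["sleep", "3600"]    # keep the pod running for interactive tests
```

With the pod running, you could run a curl command inside it (for example through kubectl exec) against a service in another security domain. A timeout suggests the traffic is being denied, while a response suggests it is allowed.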

Read the logs

Calico supports a log action for its network policies. Calico Enterprise and Calico Cloud can collect a wide range of logs that can be used to investigate flows and validate policies.
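As a rough illustration of the log action, the sketch below logs any traffic that reaches a catch-all policy before denying it. The policy name and order value are hypothetical, and it assumes that allow policies with lower order values (including DNS access) are already in place. It is best tried in a non-production environment first.

```yaml
# Hypothetical example: log, then deny, whatever earlier policies did not allow.
apiVersion: projectcalico.org/v3
kind: GlobalNetworkPolicy
metadata:
  name: log-then-deny         # illustrative name
spec:
  order: 2000                 # assumed high value so this is evaluated last
  # Exclude system namespaces, as in the default deny example.
  namespaceSelector: 'has(projectcalico.org/name) && projectcalico.org/name not in {"kube-system", "calico-system", "tigera-system"}'
  types:
    - Ingress
    - Egress
  ingress:
    - action: Log             # record the packet, then continue to the next rule
    - action: Deny
  egress:
    - action: Log
    - action: Deny
```

The logged entries show which flows are reaching the final deny, which helps identify allow policies that are missing or too narrow.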

Simulate your application or service

Using and testing the deployed application and a wide range of its functionality should show whether there are any issues after deploying and enforcing network policies.

If you need to create new policies or edit existing policies, refer to the sections above.